Lake House Implementation

0. Abstract

In progress.

1. Introduction

In progress.

2. Analysis

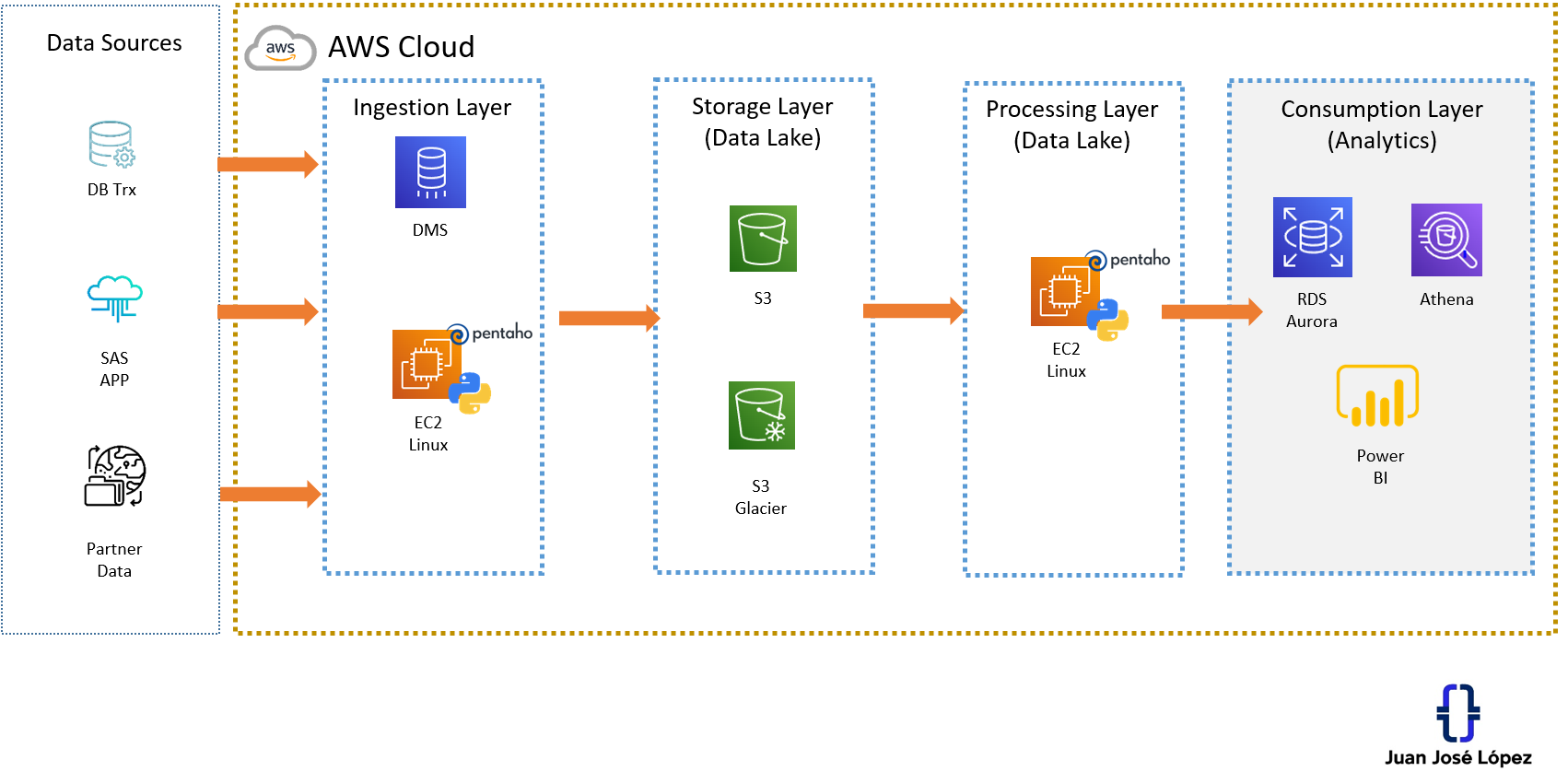

A Lake House can be defined as a data ecosystem that lets a company apply its analytical skills, get value from its data, and promote a data-driven culture. There are many ways to implement such an ecosystem; the most common ones are built on cloud services. Here I present a cost-effective, scalable, and secure solution that combines several AWS services with open-source technology.

3. Design

Lake House Architecture

4. Implementation

Step by Step:

- Creating an AWS account
- Identifying the data sources
- Ingestion Layer
  - Defining the Region where the resources will be deployed
  - Defining the Availability Zones for the private and public networks
  - Configuring the VPC and subnets
  - Configuring the EC2 and DMS services
  - Installing Java, Pentaho Data Integration, and Python on EC2
  - Configuring connections to the data sources
- Storage Layer
  - Defining the lake scheme (Gold/Silver/Bronze) in S3
  - Configuring rules and policies in S3
  - Implementing data pipelines with Pentaho / Python
  - Synchronizing the data sources compatible with DMS
- Processing Layer
  - Configuring the data transformation pipelines into the lake
- Consumption Layer
  - Setting up the Power BI workspace
  - Implementing RDS
  - Setting up Athena with S3
  - Implementing data pipelines from the lake to RDS
  - Creating data marts in RDS
  - Implementing dashboards
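
The Gold/Silver/Bronze scheme from the Storage Layer steps could be expressed as a small key-naming helper, so that every pipeline writes to a predictable S3 location. This is only a minimal sketch under stated assumptions: the bucket name, the source and table names, and the choice to partition by ingestion date (in the Hive-style `year=/month=/day=` form that Athena can read as partitions) are illustrative and not part of the original design.

```python
from dataclasses import dataclass
from datetime import date

# Zone names follow the Gold/Silver/Bronze scheme from the steps above.
ZONES = ("bronze", "silver", "gold")


@dataclass(frozen=True)
class LakePath:
    """Builds S3 keys for one table in one zone of the lake."""

    bucket: str  # hypothetical bucket name
    zone: str    # one of ZONES
    source: str  # hypothetical source system name, e.g. "crm"
    table: str   # hypothetical table name, e.g. "customers"

    def __post_init__(self) -> None:
        # Reject zones outside the agreed scheme early.
        if self.zone not in ZONES:
            raise ValueError(f"unknown zone {self.zone!r}, expected one of {ZONES}")

    def prefix(self, ingest_date: date) -> str:
        # Assumption: data is partitioned by ingestion date, Hive style.
        return (
            f"{self.zone}/{self.source}/{self.table}/"
            f"year={ingest_date:%Y}/month={ingest_date:%m}/day={ingest_date:%d}/"
        )

    def uri(self, ingest_date: date, filename: str) -> str:
        # Full S3 URI for a single object inside the partition.
        return f"s3://{self.bucket}/{self.prefix(ingest_date)}{filename}"


# Example usage with made-up names.
raw = LakePath(bucket="example-lake-house", zone="bronze", source="crm", table="customers")
print(raw.uri(date(2024, 3, 5), "part-0000.parquet"))
```

Keeping the zone as the first path segment makes it straightforward to attach different S3 rules and policies to each zone by prefix.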

5. Conclusions

In progress.